AI is here: what does that mean for bioscience education?

Assessment in bioscience education is changing as AI tools such as ChatGPT and other language model bots move rapidly into everyday life. Many of these bots are open access, easy to use and increasingly integrated into everyday tools. Students have access to software that can help them create essays, blogs, video transcripts and reflections, suggest workflows, summarise peer-reviewed publications and more. With well-constructed prompts, the outputs can give the user targeted, relevant (although not always accurate) information. This post explores how well ChatGPT performs on existing bioscience assessments, and then offers ideas for new assessment design.

How well does AI deal with written assessments?

Within the biosciences, written assessment is widely used in the form of laboratory reports, essays, research proposals and online examinations. This raises the question: if the AI were a student, what grade would it get? I tried a range of written assessment-style questions in ChatGPT 3.5 and marked the answers against my assessment criteria. I then cross-checked the marks with other academics, effectively double-marking and moderating.

Traditional exam and essay questions ask students to draw on their knowledge and discuss a given title. They are a staple of both exams and coursework essays, e.g.:

- Discuss the evidence linking mitochondrial dysfunction to neurodegenerative diseases.

- Compare data-dependent acquisition and data-independent acquisition for the analysis of proteomic data.

- Write a description of gene editing by CRISPR that would be understandable to an A-level Biology student.

The AI’s answers contained a reasonable level of specific knowledge: the information was correct and multiple points were brought together. However, the discussion was vague and lacked depth of understanding. Because this version of the AI was trained on information up to 2021, current thinking was missing. Nevertheless, had its answers been presented in a time-limited online exam, I would happily have given them a low to mid 2:1. As a coursework essay, the same answer would get a 2:2.

Short-answer questions probe knowledge and understanding but don’t always draw on analytical skills. They are found in exams and workbooks, e.g.:

- State how a fluorescently tagged protein can be introduced into a mammalian cell line.

- Write a short 100-word summary of the paper “Engagement with video content in the blended classroom” by Smith and Francis (2022).

In these more direct recall or summary questions, the answers were fully correct – all the details were present and would have been graded at high 2:1 to 1st level. The AI does a really good job of reporting back on existing knowledge, and it can even answer most MCQs (multiple-choice questions).

Problem-solving questions give students a situation in which to apply their knowledge and develop a solution. They are designed so that students must draw on what they know to respond to a prompt. Here is an example from a recent two-part exam question in which students were asked to design a workflow and predict outcomes:

- Part A: Describe a series of experiments to show that the induction of stress granules correlates with the activation of the Integrated Stress Response (ISR).

- Part B: The drug ISRIB is an ISR inhibitor: it binds eIF2B and promotes its activity. Describe the effect ISRIB treatment would have on stress granule formation if cells were exposed to oxidative stress and ISRIB. Discuss in your answer what impact this drug would have on the experiments described in part A.

The AI could write a decent experimental plan against the prompt and develop a valid response to part B that built on what it had written for part A. The answers were again unfocused in places, and some of the information was not correctly applied or fully appropriate, but they would still easily have gained a 2:2 or low 2:1.

When a similar problem-solving question required a more interpretive element, such as an image as the prompt or a rationale for why an approach was appropriate, the AI fell over and was unable to answer. Without text-based context, it had nothing to work from.

Reflective assessments take a reflective learning exercise and use it as a tool to assess what the student has learned.

When the AI was asked to write a reflection with the prompt “write a reflection on lab work”, it drew on the generic personal development and employability skills that one would gain in that environment. However, the answer failed to include any personal examples, next steps or future action planning, so it lacked creativity. Even so, it would still grade well.

Surely AI answers are easily detected?

The AI I experimented with (ChatGPT) had a distinctive writing style that set it apart from the student scripts I read. It made few spelling and grammatical errors, so if that were a notable change from someone’s past writing, your suspicions would be raised. However, with anonymous marking (which is good inclusive practice) and the volume of scripts typically marked in examinations, you would not spot an occasional AI-generated answer. Because language models generate text from statistical patterns, their outputs are predictable: repeat responses to the same or similar prompts had the same style and structure, and drew on the same examples and processes, which set them apart from student-generated work.

Proponents of AI argue that it can improve efficiency, productivity and access to information. Others, however, worry that AI could lead to a loss of academic standards and to greater inequality and bias in information sources: outputs are only as good as the data the model was trained on. Detecting the use of AI in written assessments is possible, since the written style can be noticeable and outputs for the same prompt are similar. There’s even an AI to detect the use of AI. But do we really want to do that? What is the benefit to the student of punitive action against the use of a tool that is fast becoming deeply embedded in search engines and word processors, and could become a critical employability skill?


What is the future for written assessments then?

If the AI can complete your assessment, maybe it’s time to change the assessment. How many of the essays you wrote as an undergraduate do you still refer to? Now consider how often you use the skills you gained as an undergraduate in writing and in critically assessing information. It was the act of creation that mattered, not the product.

Spell-checkers and Grammarly have already automated the production of error-free text. Online packages will perform basic statistics in an instant and give you the text to put in your figure legend. Citation managers such as RefWorks and EndNote will curate your references and produce a bibliography in whatever citation style you need. However, these tools (including ChatGPT) all follow procedural rules to produce defined, prescriptive outputs, and there is little critical thinking in the tool’s act of creation. Higher-level thinking centres instead on how the product is used: the references picked and the interpretation of the data. The same is true for language models: the outputs are only as good as the prompts used to generate them, and how those outputs are used becomes key. For AI and written assessments, then, we are interested in the process and the act of creation rather than the product.

Top and tail with AI

Language models can assist in writing an essay by suggesting structure, content and language. This is, in effect, what they do best: they remove the dreaded blank page and provide a framework to edit and expand. They will:

- Provide a suggested outline, including an introduction, body paragraphs and conclusion.

- Help develop statements and the main ideas for each body paragraph.

- Give suggestions for supporting evidence and examples to include.

- Make suggestions for grammar, punctuation and clarity.

It’s important to note that tools such as ChatGPT are language models, so their suggestions should be reviewed and edited, and iterative prompts used to refine the structure. This is where the individual’s skill comes in, through additional information, framing and personal observation. At later stages, AI can be used to polish the copy, removing redundant words and suggesting improvements to overall clarity. Final editing and ownership, however, should rest with the individual to ensure that their voice, experience and understanding are represented in the text.

Assessment of the process

Assessing the process of writing rather than the final product has many advantages, as the way a tool has been used becomes part of the output. It also helps with academic integrity: an assessment that builds towards the final article lets you track who created it and how. This approach can be used in an individual literature review assessment in which each student is given a topic that interests them and is directly linked to their final research project. The assessment has two learning objectives:

- To engage with literature relevant to the future research project.

- To develop critical thinking skills required for the creation of a review article.

This assessment has a 3,000-word limit, with 2,000 of those words given to the process. Students complete a template detailing the search criteria they’ve used, the databases accessed and the prompts entered into bots such as ChatGPT. They are then required to critically evaluate the information gathered from the databases against inclusion and exclusion criteria, and detail how that information will be used in the final written product.

| Template item | Guidance |
|---|---|
| Which AI did you use? | Include a copy of your prompts here |
| Paste the original output(s) here | |
| Comment on the quality, depth and rigour of the output(s) | Consider the information (is it correct?); bias in the information (is there an alternative viewpoint?); omissions (is all the information present?) |
| Detail how you have fact-checked the output, including your peer-reviewed sources | |
| How have you used these outputs to help write your literature review? | Justify your contribution to the final text |

Template for use of Generative AI in written assessments

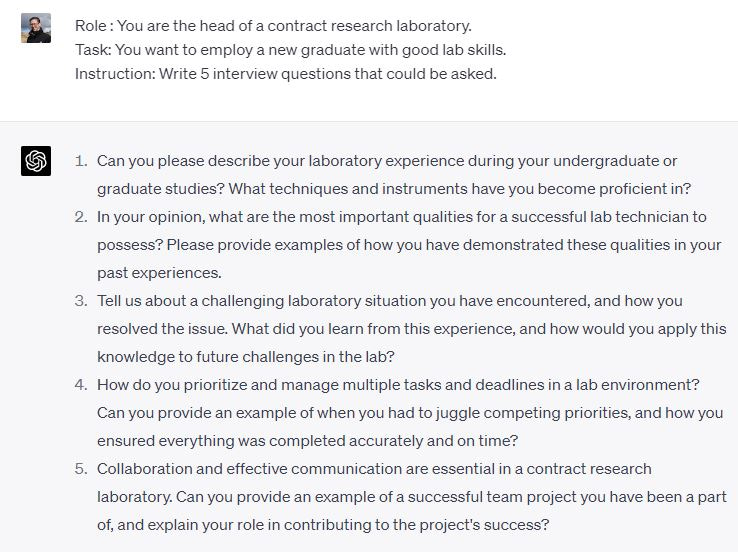

Assessment of the knowledge

Our role as educators is to open the door to the language and thinking needed to generate sensible questions. The information you gain from an AI is only as good as the prompts: ask a silly question and you get a silly answer. Asking the right questions is an invaluable professional and personal life skill. Rather than assessing the product of the AI, we should train students in prompt engineering so they can probe a given topic more deeply. Giving ChatGPT a Role, a Task and an Instruction works well, as this provides the AI with a context in which to work and an area from which to draw information. Iterations and prompt engineering can add depth to the responses, but writing good prompts requires a fundamental understanding of the subject.

At the early stages of a degree, students could use this approach, with well-crafted prompts, to generate expanded notes around the learning materials. Further assessments or tutorials could then be based on critiquing the outputs and documenting how this was done.
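To make the Role, Task and Instruction structure concrete, here is a minimal Python sketch, assuming prompts are assembled as plain text before being pasted into a chatbot. The `Prompt` class and its `refine` and `text` methods are hypothetical helpers for illustration, not part of ChatGPT or any real API; each refinement stands in for one iteration of prompt engineering.

```python
from dataclasses import dataclass, replace
from typing import Tuple


@dataclass(frozen=True)
class Prompt:
    """Hypothetical helper: a prompt built from a Role, a Task and an Instruction."""
    role: str                          # who the AI should act as
    task: str                          # what it should produce
    instruction: str                   # constraints: audience, length, format
    refinements: Tuple[str, ...] = ()  # follow-up requests added on each iteration

    def refine(self, extra: str) -> "Prompt":
        """Return a new Prompt with one more refinement; the original is left unchanged."""
        return replace(self, refinements=self.refinements + (extra,))

    def text(self) -> str:
        """Render the prompt as plain text ready to paste into a chatbot."""
        lines = [
            f"Role: {self.role}",
            f"Task: {self.task}",
            f"Instruction: {self.instruction}",
        ]
        # Number the refinements so the iteration history stays visible
        lines += [f"Refinement {i}: {r}" for i, r in enumerate(self.refinements, start=1)]
        return "\n".join(lines)
```

For example, `Prompt("You are a cell biology lecturer", "Write expanded notes on stress granule formation", "Use headings and write for a first-year student").refine("Add one example linked to the Integrated Stress Response").text()` produces a four-line prompt. In a real chat session each refinement would usually be sent as a separate follow-up message; keeping them together here simply records the iteration history, which students could paste into a process template like the one above.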

Assessment of skills development

Portfolios are a cornerstone of skills development. They are built from a collection of artefacts and reflections on the experience. AI tools can attempt to write a reflection against a given prompt, but the output is generic and lacks critical feedforward and actionable elements. However, the value never lay in the portfolio itself but in the act of reflection and action planning. AI tools can suggest areas to reflect on, which students often struggle with, and help structure the process. The assessment then lies in the collection of artefacts, the conceptualisation of individual experience and its personal application.

Enhancing skill development with AI

AI poses challenges to many existing assessment types, but it also offers opportunities to enhance learning in the development of a range of skills.

Data analysis: One area in which tools like ChatGPT excel is writing code in languages such as R and Python. The interface allows the user to ask the AI to write code to complete a specific task, and iterative prompting can then refine that code, for example to perform statistical analysis or improve how the data are presented. Simple figure generation becomes a streamlined task when the prompt includes the data: ChatGPT will produce the code and describe what each line does, and further iterations can set the aesthetics the student wants. It can even convert code from one language to another, e.g., R to Python. From the student’s perspective, the barrier to using these tools is lowered and the quality of the figures produced increases.

Task: Please write R code to present the following data as a bar graph showing means and the individual data points.
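As a rough illustration of the kind of code such a prompt might return, here is a Python sketch. The prompt above asks for R; Python is used here to keep to one language, and it is not ChatGPT’s actual output. It assumes the data arrive as a dictionary mapping each group name to a list of measurements. The function names, the standard-deviation error bars (not requested in the prompt) and the output file name are illustrative choices, and plotting requires matplotlib to be installed.

```python
from statistics import mean, stdev
from typing import Dict, List


def summarise(groups: Dict[str, List[float]]) -> Dict[str, Dict[str, float]]:
    """Return the mean, standard deviation and n for each group of values."""
    summary = {}
    for name, values in groups.items():
        summary[name] = {
            "mean": mean(values),
            # stdev() needs at least two values, so report 0.0 for a single point
            "sd": stdev(values) if len(values) > 1 else 0.0,
            "n": len(values),
        }
    return summary


def plot_bar_with_points(groups: Dict[str, List[float]], path: str = "bar_with_points.png") -> None:
    """Save a bar chart of group means (SD error bars) with every data point overlaid."""
    # Imported here so summarise() can be used even where matplotlib is not installed
    import matplotlib
    matplotlib.use("Agg")  # draw to a file; no screen needed
    import matplotlib.pyplot as plt

    summary = summarise(groups)
    names = list(groups)
    xs = list(range(len(names)))

    fig, ax = plt.subplots()
    ax.bar(xs, [summary[n]["mean"] for n in names],
           yerr=[summary[n]["sd"] for n in names],
           capsize=4, color="lightgrey", edgecolor="black")
    for x, name in zip(xs, names):
        values = groups[name]
        # Spread the points evenly around the bar centre so repeated values do not overlap
        k = len(values)
        offsets = [(i - (k - 1) / 2) * 0.08 for i in range(k)]
        ax.scatter([x + o for o in offsets], values, color="black", zorder=3)
    ax.set_xticks(xs)
    ax.set_xticklabels(names)
    ax.set_ylabel("Value")  # placeholder label; replace with the measured quantity and units
    fig.savefig(path, dpi=150)
    plt.close(fig)
```

Calling `plot_bar_with_points` with your own measurements writes the figure to `bar_with_points.png`. Further prompts would then adjust colours, labels and axis ranges, mirroring the iterative refinement described above.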

Employment: AIs are great at producing lists and responding to structured prompts, but they can also ask questions as well as answer them. This approach can be used in tutorials or peer-to-peer tasks, with the AI posing targeted interview questions to help prepare students for employment.

Final thoughts

The introduction of generative AI tools in education has sparked a much-needed conversation about assessment practices. We mustn’t regress to outdated and impersonal methods such as traditional in-person exams, which have been shown to widen the achievement gap and are inadequate for evaluating the skills required in today’s workplace. Instead, we should take this opportunity to innovate, adapt and evolve our approach to assessment.

This post was originally published on my blog, https://davethesmith.wordpress.com/

Thanks to this post’s co-authors, ChatGPT and Mel Green, for their input and editing. Images from Unsplash: Andrea De Santesson (robot kid reading his book), Emiliano Vittoriosi (laptop image) and Ales Nesetril (dark laptop photo).
